Category: Strategy

2020 has been a difficult year, and a year of many surprises. Who would have thought that, over the course of just a few months, all tech conferences and events would go virtual (or away, sadly), working from home would become the new norm, and air travel would shrink by 90%? 2020 has also been a stark reminder for individuals and enterprises alike that not everything can be calculated and predicted. So-called Black Swan events do occur and test our preparedness for the unforeseen.

Many organizations were thrown into a disruption that was more severe and happened faster than anything they had experienced or anticipated. Those who had optimized their operating model for the current, presumably steady, state found it particularly difficult to respond to a new environment that combined a sudden departure from the status quo with extreme levels of uncertainty about the path forward. It’s like knowing neither where you are nor what lies ahead.

Making Better Decisions

There are enough folks selling enterprise decision snake oil, promising you the magic power of making the right decisions. Instead, I want to share the kinds of conversations I have had about how to make better (not necessarily “right”) decisions in times of significant uncertainty.

Tackling Uncertainty with Models

The most common fallacy when making decisions, as explained in The Software Architect Elevator, is judging the quality of a decision by its outcome. When something good happens, it could be that you made a bad decision and were just lucky. Danny Kahneman’s Thinking, Fast and Slow spends roughly 500 pages elaborating on what horrible decision makers humans are, so you’re not alone if you occasionally fall into this trap or one of the many others.
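To make the trap concrete, here is a toy illustration in Python with made-up payoffs (the two decisions and all function names are hypothetical, not taken from any book): the decision with the higher expected value can still produce the worse outcome in any single run.

```python
import random

# Hypothetical decisions, each a list of (probability, payoff) pairs.
decision_a = [(0.9, 10), (0.1, -20)]  # usually wins a little, rarely loses
decision_b = [(0.1, 50), (0.9, -5)]   # rarely wins big, usually loses a little

def expected_value(decision):
    """Probability-weighted payoff: what the quality of the decision rests on."""
    return sum(p * payoff for p, payoff in decision)

def sample_outcome(decision, rng):
    """Draw one outcome: the single result you actually get to observe."""
    r = rng.random()
    cumulative = 0.0
    for p, payoff in decision:
        cumulative += p
        if r < cumulative:
            return payoff
    return decision[-1][1]  # guard against floating-point rounding in the sum

def share_where_b_looks_better(trials=10_000, seed=1):
    """Fraction of single runs in which the worse decision yields the better outcome."""
    rng = random.Random(seed)
    b_better = sum(sample_outcome(decision_b, rng) > sample_outcome(decision_a, rng)
                   for _ in range(trials))
    return b_better / trials

print("expected value A:", expected_value(decision_a))
print("expected value B:", expected_value(decision_b))
print("share of runs where B beats A:", share_where_b_looks_better())
```

Decision A has the far higher expected value (7 versus 0.5), yet B beats A in a single run with probability 0.1 + 0.9 × 0.1 = 0.19, so the simulation should report a share close to that. Judging by one outcome would reward the worse decision roughly one time in five.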

Luckily, there’s a way to drastically improve the quality of decision making without snake oil, and even without a Blockchain: using models, or, more precisely, decision models. It’s been shown many times that even simple models, and often especially simple ones, help you make better decisions. My go-to resource for improving decision-making with models is Scott Page’s The Model Thinker (which is included in my Architect Bookshelf) and his related Coursera course.

Stacking Models

Scott’s book includes the notion of Many Model Thinkers, folks who apply more than one model to a given problem. Applying more than one model can help avoid blind spots—one model can fill a gap left by another one—while keeping the models simple.

The benefits of using multiple models apply not just to individuals but to organizations as well. Especially when faced with high degrees of uncertainty, as many organizations surely are now, one model size won’t fit all. You can even use a model to decide which decision model to apply in which circumstances.

How “Right” Can You Be?

Most experienced architects know that being “right” is relative. It’s more about having a good frame of mind and data at hand to make an informed and rational decision. The degree of uncertainty (and the complexity of the system you are looking at) will influence how you can approach a decision. The danger is that high degrees of uncertainty could lead you to just “wing it” because you don’t know what’s going to happen anyway. Unless you fall outside of Danny Kahneman’s sphere of analysis, that’s the most reliable way to make a poor decision.

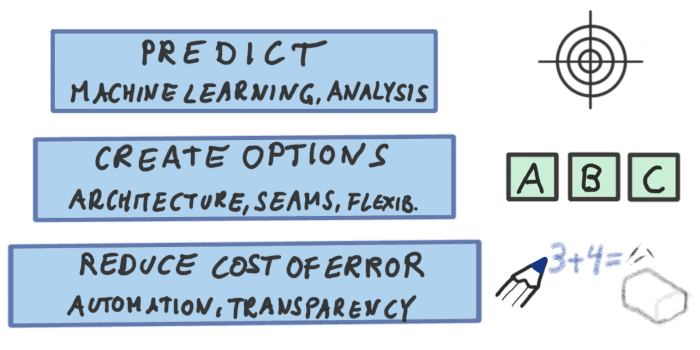

When faced with varying degrees of uncertainty, I hence advise my customers to structure their thinking into three distinct buckets:

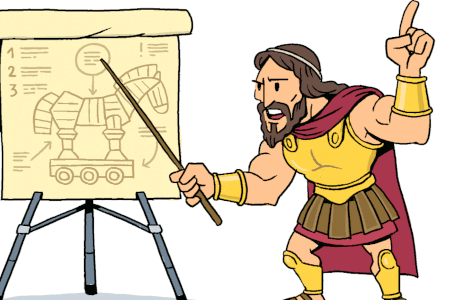

Being “right” in the face of uncertainty.

You can try to predict the “right” answer, you can prepare options for multiple scenarios even if you are unsure which one will occur, or you can better prepare for being wrong, which will likely happen sooner or later. Although each approach is relatively straightforward, reminding people that they work hand-in-hand helps them become more effective multi-model thinkers.

Predict the Outcome

Dealing with uncertainty has much improved in recent years thanks to the broad availability of machine learning technology, which includes pre-trained models, open-source frameworks such as TensorFlow, and fully managed environments like Amazon SageMaker.

The most frequent mistake I have seen organizations make when deploying machine learning models for prediction is expecting these models to be highly accurate. Wouldn’t it be nice if we could just predict most things? After all, this is artificial intelligence! Unfortunately, although prediction models can be very valuable, they’re not a crystal ball. Trying to make them into one usually leads to overfitted models that don’t actually predict much beyond the past they were trained on.
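A minimal, dependency-free sketch of that failure mode, using invented noisy data instead of a real ML framework such as TensorFlow: a "model" that simply memorizes its training points reproduces the past perfectly, yet does worse on new data than a plain least-squares line. The data generator and all names here are hypothetical.

```python
import random

def make_data(n, rng):
    """Hypothetical 'true' process y = 2x + 1, plus noise no model can predict."""
    points = []
    for _ in range(n):
        x = rng.uniform(0, 10)
        points.append((x, 2 * x + 1 + rng.gauss(0, 2)))
    return points

def fit_line(points):
    """Ordinary least squares for y = a*x + b: a deliberately simple model."""
    n = len(points)
    mean_x = sum(x for x, _ in points) / n
    mean_y = sum(y for _, y in points) / n
    a = (sum((x - mean_x) * (y - mean_y) for x, y in points)
         / sum((x - mean_x) ** 2 for x, _ in points))
    b = mean_y - a * mean_x
    return lambda x: a * x + b

def fit_memorizer(points):
    """Overfitted 'model': answers with the y of the nearest past point, noise and all."""
    return lambda x: min(points, key=lambda p: abs(p[0] - x))[1]

def mean_squared_error(model, points):
    return sum((model(x) - y) ** 2 for x, y in points) / len(points)

rng = random.Random(7)
history, future = make_data(200, rng), make_data(200, rng)
line, memorizer = fit_line(history), fit_memorizer(history)
for name, model in (("line", line), ("memorizer", memorizer)):
    print(f"{name:>9}: error on the past {mean_squared_error(model, history):.2f}, "
          f"on new data {mean_squared_error(model, future):.2f}")
```

The memorizer has zero error on the data it was trained on but a larger error than the simple line on data it hasn't seen, because it learned the noise along with the signal.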

So, use machine learning models on the data you have, but be prepared to supplement them with additional mechanisms to handle the remaining uncertainty.

Create Options

Most of my readers will know that I like creating options, so much so that I routinely describe architecture as the business of creating and selling options; a much expanded version of that idea is part of The Software Architect Elevator.

Options are great when you can’t predict what’s going to happen but have a reasonable idea of the discrete choices the future path will fall into. Are you unsure about the workload your application will have to handle? Create the option for elastic scaling, e.g., by deploying in the cloud or on a serverless platform. Might you need to migrate to a different database? An object-relational mapping layer will create some options for you.
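A minimal sketch of such a database option in Python, assuming a hand-rolled repository interface rather than an actual ORM (the names `CustomerRepository`, `InMemoryRepository`, `SqliteRepository`, and `register` are hypothetical): application code depends only on the interface, so switching storage engines later means writing one new class instead of rewriting the callers.

```python
import sqlite3
from typing import Optional, Protocol

class CustomerRepository(Protocol):
    """Hypothetical interface: the 'option' is that callers never see the storage engine."""
    def add(self, customer_id: int, name: str) -> None: ...
    def name_of(self, customer_id: int) -> Optional[str]: ...

class InMemoryRepository:
    def __init__(self) -> None:
        self._rows: dict[int, str] = {}

    def add(self, customer_id: int, name: str) -> None:
        self._rows[customer_id] = name

    def name_of(self, customer_id: int) -> Optional[str]:
        return self._rows.get(customer_id)

class SqliteRepository:
    def __init__(self, path: str = ":memory:") -> None:
        self._conn = sqlite3.connect(path)
        self._conn.execute(
            "CREATE TABLE IF NOT EXISTS customer (id INTEGER PRIMARY KEY, name TEXT)")

    def add(self, customer_id: int, name: str) -> None:
        with self._conn:  # commits on success, rolls back on error
            self._conn.execute(
                "INSERT OR REPLACE INTO customer (id, name) VALUES (?, ?)",
                (customer_id, name))

    def name_of(self, customer_id: int) -> Optional[str]:
        row = self._conn.execute(
            "SELECT name FROM customer WHERE id = ?", (customer_id,)).fetchone()
        return row[0] if row else None

def register(repo: CustomerRepository, customer_id: int, name: str) -> str:
    """Application logic written once, against the interface only."""
    repo.add(customer_id, name)
    return f"registered {repo.name_of(customer_id)}"

for repo in (InMemoryRepository(), SqliteRepository()):
    print(type(repo).__name__, register(repo, 1, "Ada"))
```

The extra layer of indirection is the option’s price: it has to be maintained even if the database never changes.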

Just like a negative outcome doesn’t imply a poor decision, an unused option isn’t wasted either. Whether to “buy” an option sold by the architects depends on the option’s cost (usually build effort and complexity) and its benefit (determined by the likelihood that it’ll be needed and the time or cost saved by having it in place).
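The cost/benefit reasoning can be written down as a small expected-value check. This is a simplified sketch rather than a prescribed formula: it assumes cost and savings are expressed in the same unit (say, person-days) and that the likelihood of needing the option can be estimated. The `ArchitectureOption` class and the numbers are invented for illustration.

```python
from dataclasses import dataclass

@dataclass
class ArchitectureOption:
    name: str
    cost: float        # up-front price: build effort plus added complexity, in person-days
    likelihood: float  # estimated probability that the option will be exercised (0..1)
    savings: float     # time/cost saved if it is needed and already in place

    def expected_benefit(self) -> float:
        return self.likelihood * self.savings

    def worth_buying(self) -> bool:
        # Buy when the expected benefit exceeds the up-front cost. If the option
        # then goes unused, the decision was still sound: it wasn't wasted.
        return self.expected_benefit() > self.cost

options = [
    ArchitectureOption("elastic scaling", cost=10, likelihood=0.5, savings=60),
    ArchitectureOption("database abstraction", cost=15, likelihood=0.1, savings=80),
]
for option in options:
    print(f"{option.name}: expected benefit {option.expected_benefit():.1f} "
          f"vs. cost {option.cost} -> buy: {option.worth_buying()}")
```

With these made-up inputs, the scaling option pays off in expectation (30 versus 10) while the database abstraction does not (8 versus 15); different estimates would flip the answer, which is the point of making them explicit.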

Options work best when the uncertainty can be modeled in a specific dimension, such as scale (number of servers or instances). Also, options aren’t free. Trying to create options for all possible circumstances will drown you in complexity, as articulated by what I lightheartedly call “Gregor’s Law”:

Excessive complexity is nature’s punishment for those who are unable to make decisions.
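One way to see why options aren’t free: every independent option multiplies the number of configurations that have to be built, tested, and understood. A back-of-the-envelope sketch, assuming options can be combined independently (a simplification; the function name is hypothetical):

```python
import math

def count_configurations(choices_per_option):
    """Number of distinct setups when each option can be set independently."""
    return math.prod(choices_per_option)

for n in (1, 3, 5, 10, 20):
    print(f"{n:>2} two-way options -> {count_configurations([2] * n):>9,} configurations")

# Options with more than two choices grow the space even faster, e.g. 2 x 3 x 4:
print(count_configurations([2, 3, 4]))
```

Ten two-way options already yield 1,024 combinations, which is why options are best reserved for the dimensions where uncertainty actually matters.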

So, you’d better accept that you’ll be wrong many times despite having good predictions and several options ready.

Reduce the Cost of Error

Luckily, the battle isn’t lost: you can make being wrong a lot less troublesome if you are well prepared. No one minds being wrong if it doesn’t cost much. Forgot to include a feature that’s now urgently needed? Having a well-oiled software delivery pipeline with automated builds and tests at hand makes this much less of a drama than being locked into a five-year outsourcing contract that’s just waiting for an opportunity to negotiate a change order.

The key instruments for reducing the cost of error are transparency and automation. Transparency allows you to detect an error early and understand its nature. Automation makes implementing a fix fast and repeatable.
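A toy model of those two instruments in Python. The step names and checks are hypothetical stand-ins for a real build and test pipeline: automation means the same steps run in the same order every time, and transparency means a failure reports which step broke and why, so the error surfaces early rather than in production.

```python
from typing import Callable, Optional

# Hypothetical pipeline step: (name, check); a check returns None on success
# or an error message on failure.
Step = tuple[str, Callable[[dict], Optional[str]]]

def run_pipeline(build: dict, steps: list[Step]) -> tuple[bool, list[str]]:
    """Run every step in a fixed order; stop at the first failure and report it."""
    log = []
    for name, check in steps:
        error = check(build)
        if error is not None:
            log.append(f"FAIL {name}: {error}")  # transparency: what broke, and where
            return False, log
        log.append(f"ok   {name}")
    return True, log

steps: list[Step] = [
    ("compile", lambda b: None if b.get("compiles") else "build does not compile"),
    ("unit tests", lambda b: None if b.get("tests_failed", 0) == 0
                             else f"{b['tests_failed']} test(s) failed"),
    ("required features", lambda b: None if "new_report" in b.get("features", [])
                                    else "feature 'new_report' missing"),
]

ok, log = run_pipeline({"compiles": True, "tests_failed": 0, "features": []}, steps)
print("\n".join(log))
```

The first run fails at "required features" and says so; after adding the feature, the identical automated run passes, which is what keeps the cost of that forgotten feature low.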

Maintaining Balance

Reducing the cost of being wrong isn’t an open invitation to a happy-go-lucky approach to IT management. Or, as I often comment about agile methods:

Agile reminds us that we don’t know everything. It doesn’t tell us to pretend we know nothing.

Reducing the cost of error is your fallback, not a substitute for prediction and having good options at hand. The simple model reminds us that we have three mechanisms available and that we should use them all in a balanced manner. How often you are able to predict and how many options you will need will vary from one organization to another. However, it’s unlikely that you can do without any one of the three mechanisms entirely.
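The balance can be sketched as a simple routing rule, in the spirit of using a model to choose among models. This is an illustration under stated assumptions, not a method from the source: the inputs (a confidence score for the prediction, the scenario you find yourself in, and the set of options you prepared) and the 0.8 threshold are hypothetical.

```python
from typing import Optional

def choose_mechanism(confidence: float, scenario: Optional[str],
                     prepared_options: set[str], threshold: float = 0.8) -> str:
    """Pick which of the three mechanisms to lean on for a given decision."""
    if confidence >= threshold:
        return "predict"               # the prediction is trustworthy enough here
    if scenario is not None and scenario in prepared_options:
        return "exercise option"       # can't predict, but we prepared for this case
    return "reduce cost of error"      # fallback: detect quickly, fix cheaply

prepared = {"traffic spike", "database migration"}
print(choose_mechanism(0.9, None, prepared))
print(choose_mechanism(0.4, "traffic spike", prepared))
print(choose_mechanism(0.4, "new regulation", prepared))
```

In practice the third mechanism stays in place underneath the other two; the function only shows which one you lean on first.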

Strengthening the Metaphor

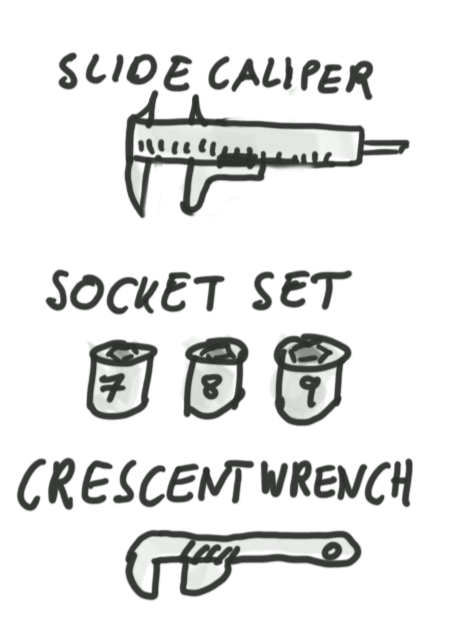

Architects love metaphors (for a fun instance of juggling architecture metaphors, have a listen to my podcast with Neal Ford and Mike Mason), so let’s see whether a metaphor can make the three levels more memorable. As with any good metaphor, the elements should come from the same domain. Let’s try tooling:

- You could try to measure exactly and use a precise tool. Prediction is like using a caliper: you know what you will get.

- You can have a set of tools available and see which one fits. Having options is like a socket wrench set (standardization increases the success rate).

- A crescent wrench is a good catch-all for everything that fits neither: it reduces the cost of error.

The metaphor also shows that a crescent wrench is bulky and doesn’t fit as well as a socket wrench, so it’s a fallback, not a substitute. And a socket set isn’t great if you are trying to loosen that 1/2” nut with a 13mm socket and strip it in the process. Measuring would have been better in that case (global standards would also have helped, but as long as some countries keep writing on “Letter” paper, we won’t hold our breath).
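The mismatch in that example is easy to quantify, using the exact definition of the inch as 25.4 mm:

```latex
\[
\tfrac{1}{2}\,\text{in} \times 25.4\,\tfrac{\text{mm}}{\text{in}} = 12.7\,\text{mm},
\qquad
13\,\text{mm} - 12.7\,\text{mm} = 0.3\,\text{mm} \approx 2.4\%
\]
```

The socket is only about 2.4% oversized: close enough to seem to fit, which is exactly why measuring first pays off.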

Are Simple Models Obvious?

More likely than not, you have used prediction and options when defining your IT strategy. And you have likely used automation to reduce the cost of change, or of being wrong. So, does the model shared in this post still bring value? I believe so. Good models aren’t there to bring you never-before-seen ideas; instead, they routinely build on things you already know or already use.

However, such models help you structure and communicate your thinking. For example, if a client is intent on refining their prediction model yet again, you could highlight that it might be useful to invest in options or in reducing the cost of error instead. This isn’t telling the customer that they are wrong, but it gives them a wider horizon from which to think about their situation. It’s a classic architect Zoom-out maneuver.

In times of increasing complexity, pace, and uncertainty, structuring and communicating your thinking becomes more valuable than ever. Often this is achieved with simple models that might even be labeled “obvious” in hindsight. I love introducing things that are obvious once done but that weren’t used beforehand. It’s one of the most valuable things you can bring into an organization.

Grow Your Impact as an Architect

The Software Architect Elevator helps architects and IT professionals to take their role to the next level. By sharing the real-life journey of a chief architect, it shows how to influence organizations at the intersection of business and technology.